
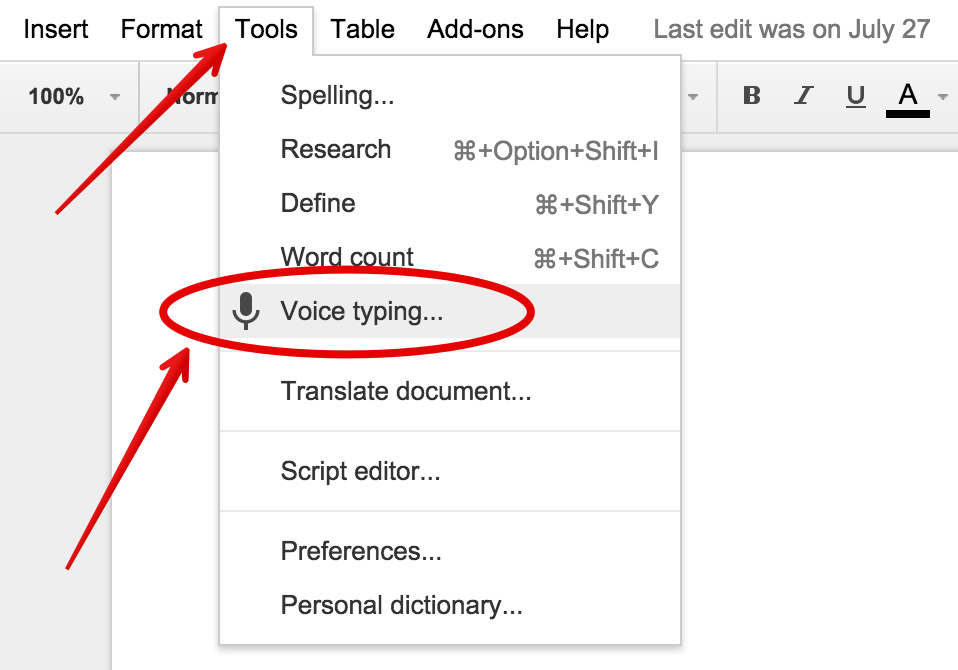

12/25/2023 Transcribe speech to text Google Docs

You can easily use either Microsoft Azure Speech Service or Google Cloud Speech-to-Text for transcribing meetings and conference calls.

Performance

For straightforward audio transcription, Microsoft Azure Speech Service tends to perform better than Google Cloud Speech-to-Text. The difference is that Microsoft’s software uses AI to make sure that what it’s transcribing makes linguistic sense. Google largely sticks to recognizing words based on their audio signatures and stringing them together. Since Microsoft’s software can accept custom speech models, it also handles accents, lisps, and other speech impediments significantly better than Google’s Speech-to-Text platform.

All that said, getting better results from Microsoft’s software is dependent on using high-quality speech and acoustic models. This means that when the software is struggling with audio quality or interpreting an accent, the transcription quality can suffer quite a bit.

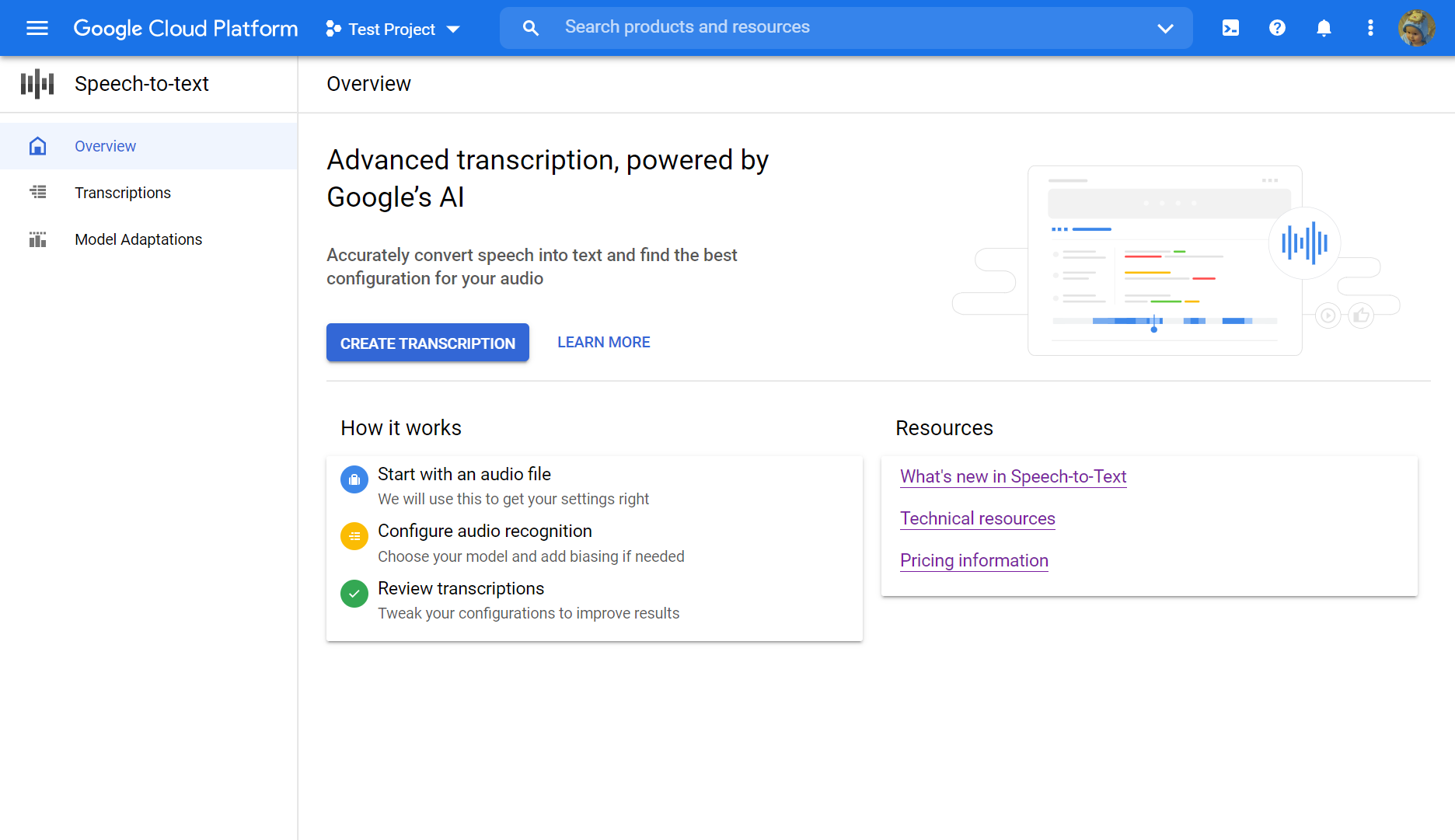

Google Cloud Speech-to-Text supports punctuation and recognizes multiple speakers in recordings. Microsoft Azure Speech Service is more feature-rich when it comes to getting your transcription exactly right. You can feed the software a custom speech model to improve accuracy for a single speaker or for speakers with a regional accent. Speech Service also supports acoustic models that you can use to cancel out noise in your recordings. This is especially helpful if you frequently experience audio noise in a conference room or over a headset. Speech Service’s API also enables you to code real-time feedback. So, if the software is having trouble recognizing words, it could prompt the speaker to talk more slowly or clearly to achieve better results. Both Microsoft’s and Google’s platforms automatically detect when there are multiple speakers in a recording.

Take a sample input audio source encoded in “acelp.kelvin”, which is not supported by the STT API. To determine how the audio source is encoded, run `ffmpeg -i input.wav`; the output will show the encoding.

Encoding conversion is a straightforward process. The platform of choice for the conversion is determined by the number and size of the audio files and the amount of conversion needed. Converting a small number of files can be accomplished from the command line or by running a Python program locally. If this is a recurring task, building an image and using a serverless platform like Cloud Run is ideal. Vertex Workbench is more efficient at processing audio files of increasing size, particularly when parallel processing is needed.

FFmpeg requires that audio files be in the same location as the software, which can lead to performance issues when processing large audio files, especially when they are stored in a GCS bucket. To avoid this, it is recommended to choose a processing platform on GCP that is in the same region as the GCS bucket where the audio files are stored. This reduces processing time, as the data does not need to be transferred between regions.

Head over to the detailed documentation and try the walkthroughs to get started with the Speech-to-Text API.
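The encoding check and local conversion described above could be sketched in Python roughly as follows. This is a minimal sketch, not the original author's code: the function names (`probe_command`, `convert_command`, `detect_codec`, `convert_all`) are hypothetical, the target format of mono 16-bit PCM WAV at 16 kHz (LINEAR16) is an assumption about what you want to send to the STT API, and it assumes `ffmpeg` and `ffprobe` are installed and on the PATH.

```python
import json
import subprocess
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def probe_command(src):
    """Build an ffprobe command that reports the first audio stream as JSON."""
    return [
        "ffprobe", "-v", "error",
        "-select_streams", "a:0",
        "-show_entries", "stream=codec_name,sample_rate,channels",
        "-of", "json",
        str(src),
    ]


def convert_command(src, dst, sample_rate=16000):
    """Build an ffmpeg command that writes mono 16-bit PCM WAV (LINEAR16).

    The 16 kHz default is an assumption; adjust it to your audio source.
    """
    return [
        "ffmpeg", "-y",            # overwrite the output file if it exists
        "-i", str(src),
        "-ac", "1",                # one audio channel
        "-ar", str(sample_rate),   # output sample rate in Hz
        "-c:a", "pcm_s16le",       # 16-bit little-endian PCM
        str(dst),
    ]


def detect_codec(src):
    """Return the codec name of the first audio stream, or None if absent."""
    result = subprocess.run(
        probe_command(src), capture_output=True, text=True, check=True
    )
    streams = json.loads(result.stdout).get("streams", [])
    return streams[0]["codec_name"] if streams else None


def convert_all(sources, out_dir, workers=4):
    """Convert several local files in parallel; returns the output paths."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)

    def convert(src):
        dst = out_dir / (Path(src).stem + "_linear16.wav")
        subprocess.run(convert_command(src, dst), check=True, capture_output=True)
        return dst

    # Threads are enough here: each worker only waits on an ffmpeg process.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(convert, sources))
```

For a handful of files, calling `detect_codec("input.wav")` and then `convert_all(["input.wav"], "converted")` locally may be all that is needed; for recurring or large jobs, the same commands could be packaged into an image and run on a platform in the same region as the bucket, as the text suggests.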
